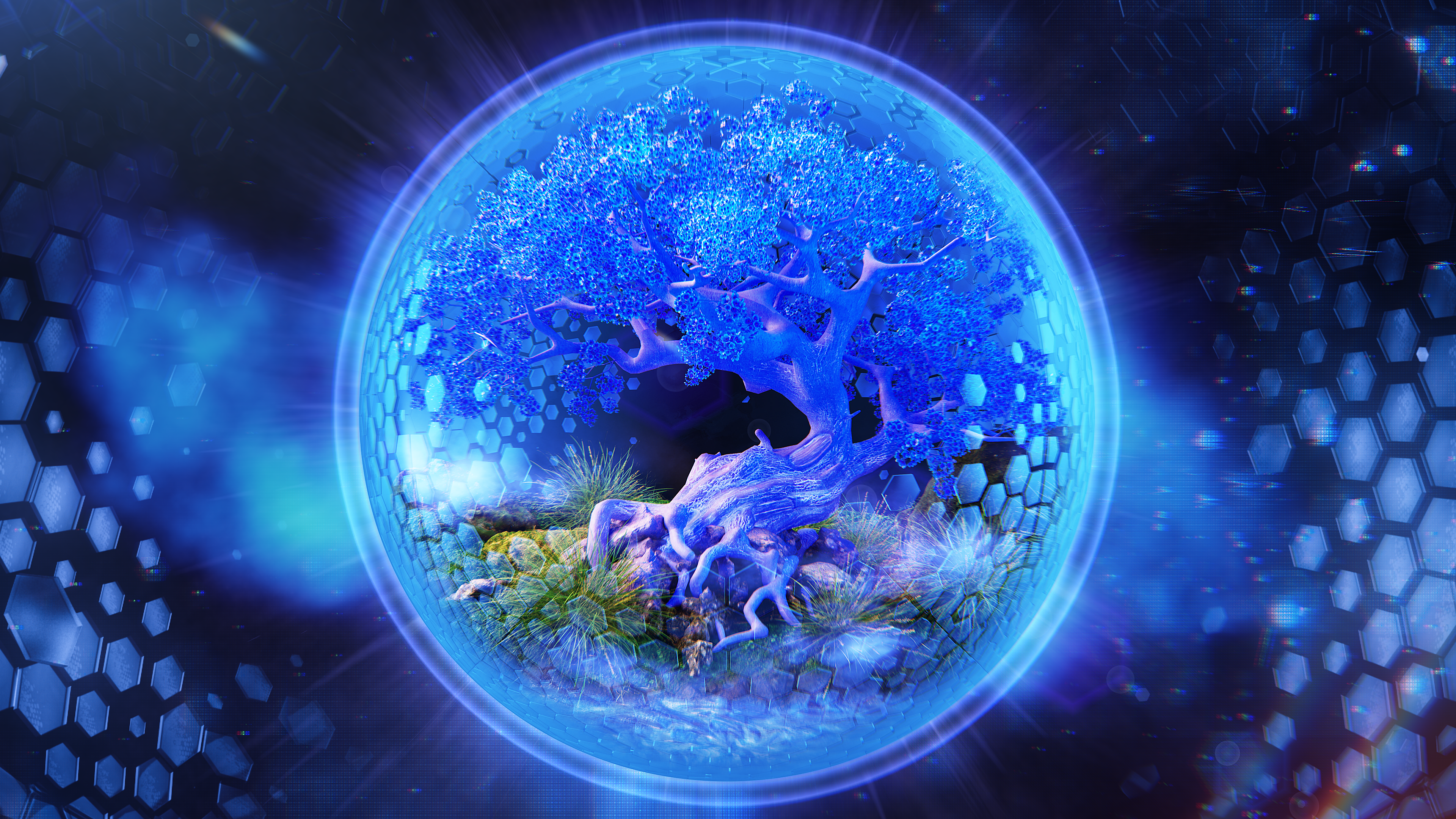

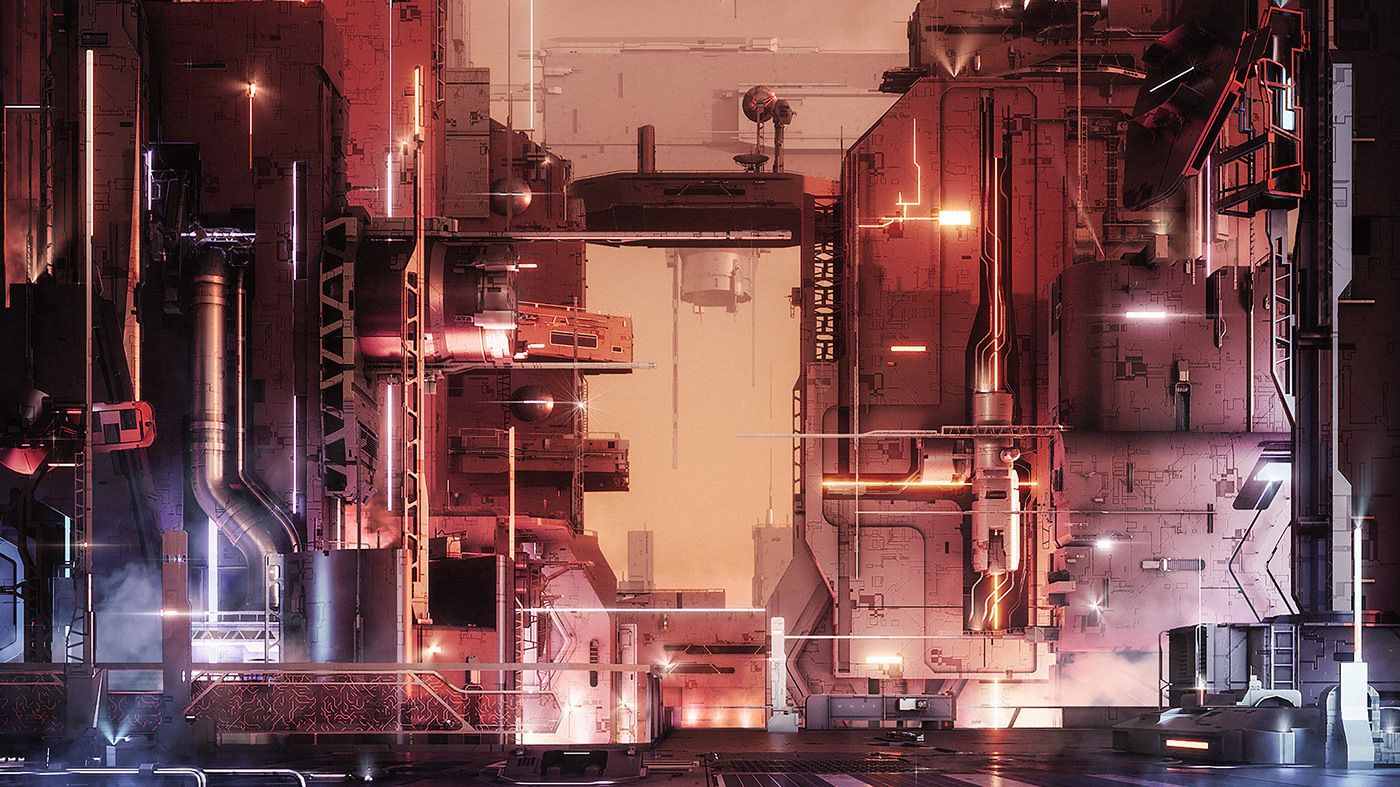

Richard's design concept tied together elements from the individual artists' work into a larger visual narrative: “Different sci-fi tropes and pop culture references are a common theme of all Music First artists”. For the festival, Richard wanted to create a giant simulation that the audience could take part in. He envisioned a Skynet-like mesh of code, with a veiled robot AI manipulating reality from a central core. The ‘Core Stage’ would be a brutalist-looking supercomputer built from LED screens.

Richard decided early on to build as much of the content as possible in real-time with Notch: “Producing 8 audio-visual intros plus 7 hours of show content, I didn’t have time for a traditional rendering workflow. Most of the 3D work was completed in Notch in a procedural manner, while 2D elements were done with asset bashing and real-time fx within Resolume”.

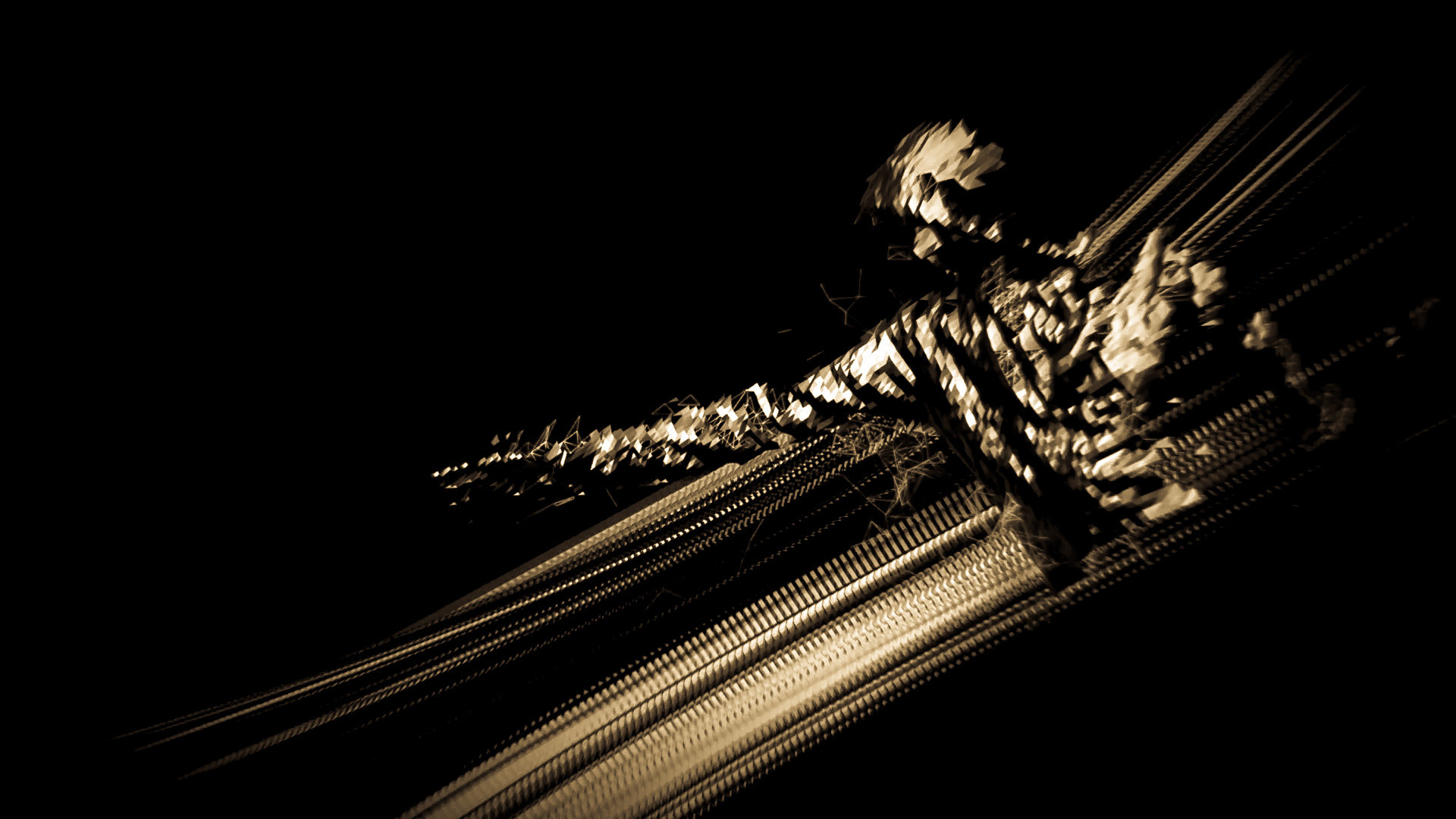

To create the audio-visual artist intros, Richard took a high-poly model, then retopologised, UV mapped and rigged it in 3ds Max with a basic idle animation. The model was exported to Notch as FBX, where it was combined with other 3D assets. Richard textured the assets using Notch materials and added secondary animation with math modifiers. Finally, the scenes were lit with Notch area lights combined with HDR reflections. For the audio, Richard took stems from a track designed by Roger Ye of Sensualise. The stems drove the character's eyes (through texture and glow animations), the camera shake and post-effect digital glitches: “Audio driven animations allowed me to swap out audio for each new animation, drastically reducing manual keyframing”.
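Inside Notch this is done with the tool's own audio-analysis nodes, so the snippet below is not Notch's implementation. It is a generic, minimal sketch of the underlying idea, assuming a mono stem given as a list of float samples: an envelope follower turns the stem into a smooth level, which is sampled once per video frame and mapped onto animation parameters. The function names and the glow and shake ranges are hypothetical, chosen only for illustration. Because the mapping is defined once, swapping in a different stem re-drives the same animation without new keyframes.

```python
import math


def envelope(samples, sample_rate, attack_ms=5.0, release_ms=120.0):
    """One-pole peak envelope follower with separate attack and release times."""
    attack = math.exp(-1.0 / (sample_rate * attack_ms / 1000.0))
    release = math.exp(-1.0 / (sample_rate * release_ms / 1000.0))
    env = 0.0
    out = []
    for s in samples:
        level = abs(s)
        # Rise quickly on transients, fall back slowly afterwards.
        coeff = attack if level > env else release
        env = coeff * env + (1.0 - coeff) * level
        out.append(env)
    return out


def per_frame(env, sample_rate, fps):
    """Pick one envelope value per video frame."""
    n_frames = len(env) * fps // sample_rate
    return [env[i * sample_rate // fps] for i in range(n_frames)]


def map_to_range(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly remap value from [in_lo, in_hi] to [out_lo, out_hi], clamped."""
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(max(t, 0.0), 1.0)
    return out_lo + t * (out_hi - out_lo)


if __name__ == "__main__":
    sr, fps = 48_000, 25
    # Synthetic "kick" stem: a decaying 60 Hz burst repeated every half second.
    stem = [
        math.sin(2 * math.pi * 60 * (n % 24_000) / sr) * math.exp(-(n % 24_000) / 2_000)
        for n in range(sr * 2)
    ]
    levels = per_frame(envelope(stem, sr), sr, fps)
    for frame, level in enumerate(levels[:5]):
        glow = map_to_range(level, 0.0, 1.0, 0.2, 3.0)    # hypothetical eye-glow intensity
        shake = map_to_range(level, 0.3, 1.0, 0.0, 0.05)  # hypothetical camera offset
        print(f"frame {frame}: eye glow {glow:.2f}, camera shake {shake:.3f}")
```

The short attack keeps hits snappy while the longer release stops the glow and shake from flickering between frames.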

Notch was also used as a pre-visualisation aid throughout the programming of the show. Richard built a preview system using NDI output from Resolume. By displaying the NDI stream on a UV-mapped 3D model of the stage, Richard could get a clear idea of how his design would look live: “Being able to visualise your final output without the burden of multi-application intermediate steps allows for quick iteration and design refinement”.

The final result was eight segments of incredibly polished content. Each segment had its own personality, reflecting the artist performing. The astoundingly life-like AI animation that introduced each act made a real impression: “The audience reaction was hugely positive. I was surprised by the number of people that contacted me via Facebook and Instagram directly just to comment on how much they loved the visual show. It’s definitely a career highlight”.

Creative direction, motion design and creative coding. Live show, vision playback systems and VJ operation.

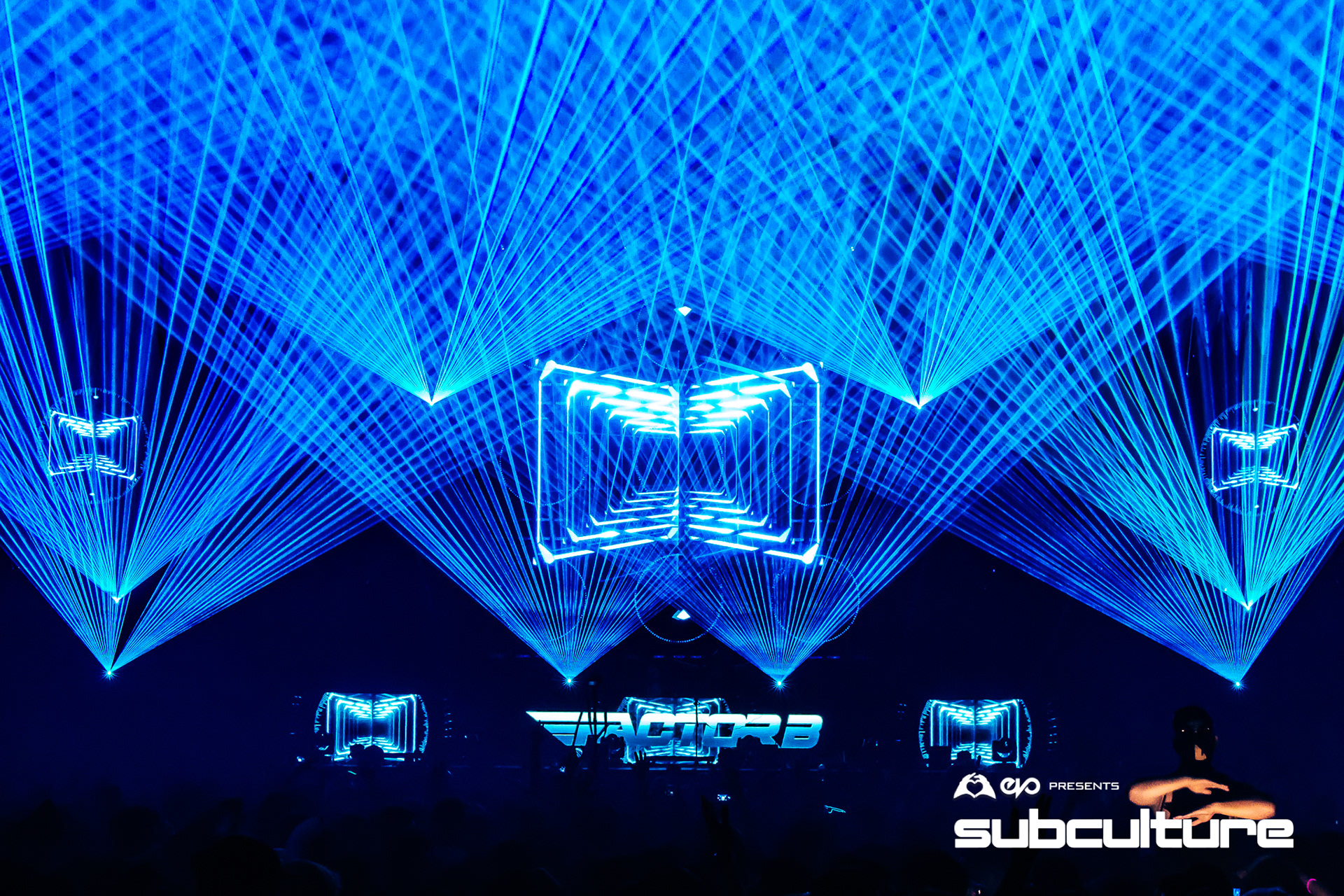

Subculture Festival is an event showcasing electronic artists from the Dublin-based label Music First. MNVR Creative Director Richard De Souza used Notch’s real-time workflow to create an entire visual package for the event.

Photography by Nathan Doran